2025

OBCache: Optimal Brain KV Cache Pruning for Efficient Long-Context LLM Inference

Yuzhe Gu, Xiyu Liang, Jiaojiao Zhao, Enmao Diao

Under review. 2026

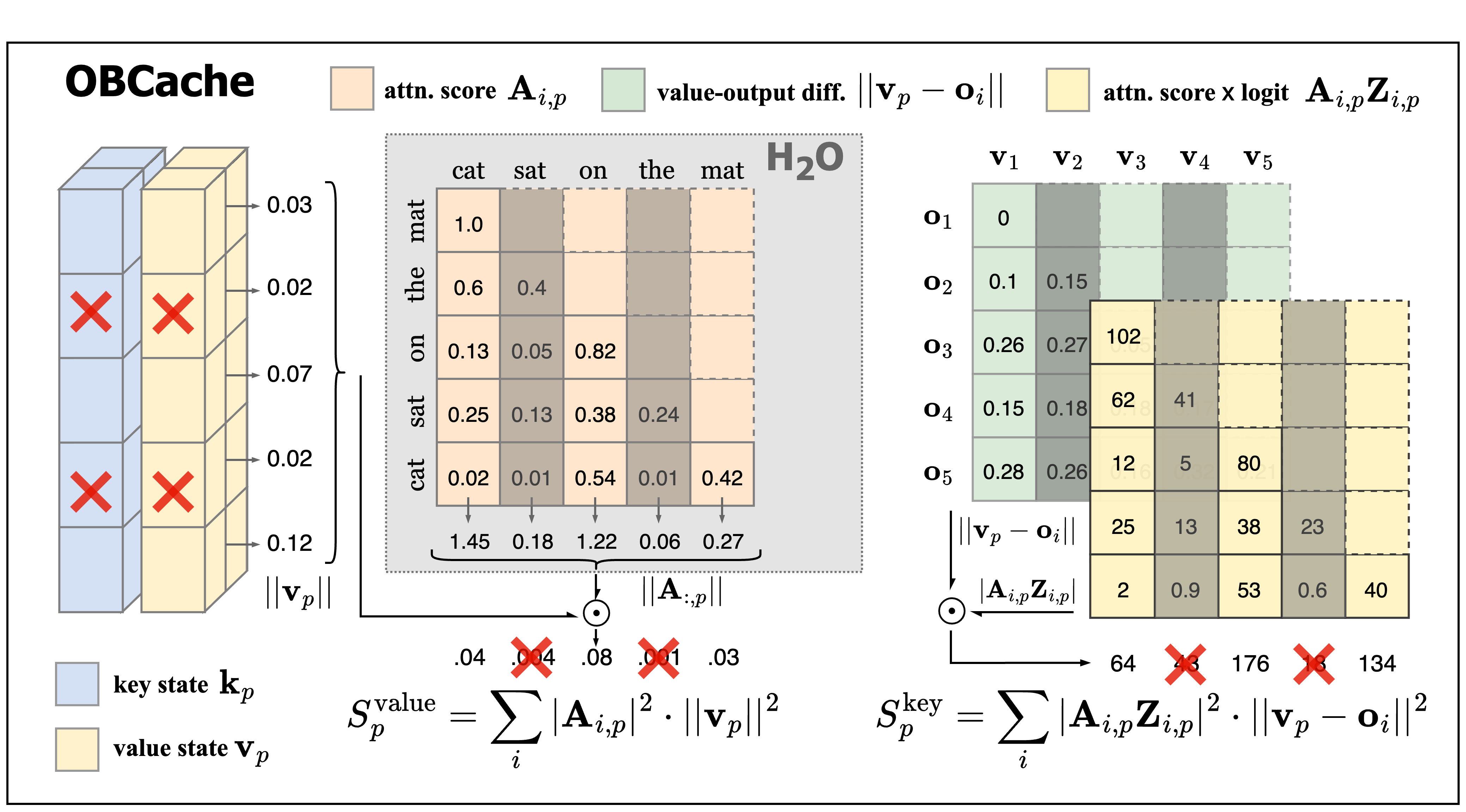

Optimal Brain Cache (OBCache) is a principled framework that formulates KV cache eviction as a layer-wise structured pruning problem. OBCache quantifies token saliency by measuring the perturbation in attention outputs induced by pruning tokens, with closed-form scores derived for isolated keys, isolated values, and joint key-value pairs.
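To make the notion of output-perturbation saliency concrete, the following is a minimal brute-force sketch and not the closed-form OBCache scores, which are not reproduced here. It assumes a single query vector, a single attention head, and squared L2 distance as the perturbation measure; the function names (`attention_output`, `brute_force_saliency`, `evict`) are hypothetical and chosen only for illustration.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention_output(q, keys, values):
    """Single-query scaled dot-product attention over cached keys and values."""
    scale = math.sqrt(len(q))
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
    weights = softmax(scores)
    dim_v = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim_v)]


def brute_force_saliency(q, keys, values):
    """Squared L2 change in the attention output when each token is dropped.

    Assumption: this recomputes attention once per token, which is only
    practical for illustration; a closed-form score avoids the recomputation.
    """
    if len(keys) < 2:
        raise ValueError("need at least two cached tokens to prune one")
    full = attention_output(q, keys, values)
    saliency = []
    for i in range(len(keys)):
        kept_keys = keys[:i] + keys[i + 1:]
        kept_values = values[:i] + values[i + 1:]
        pruned = attention_output(q, kept_keys, kept_values)
        saliency.append(sum((a - b) ** 2 for a, b in zip(full, pruned)))
    return saliency


def evict(q, keys, values, budget):
    """Return the sorted indices of the `budget` most salient tokens to keep."""
    saliency = brute_force_saliency(q, keys, values)
    ranked = sorted(range(len(keys)), key=lambda i: saliency[i], reverse=True)
    return sorted(ranked[:budget])


# Toy cache: token 0 dominates the attention weights for this query.
q = [1.0, 0.0]
keys = [[4.0, 0.0], [0.0, 0.0], [0.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
kept = evict(q, keys, values, budget=1)
```

In this toy cache, dropping token 0 moves the attention output far more than dropping either of the other two tokens, so it is the one retained under a budget of one. Scoring by output perturbation, rather than by attention weight alone, lets the value vectors influence which tokens are kept.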
